Testing plays a critical role in CI/CD. That is a safe statement to make. But what types of testing should be conducted during the CI/CD lifecycle? In this article, we’ll look at the testing involved at each stage of the lifecycle.

CI/CD

Before drilling down into the testing types, let’s take a quick look at CI/CD itself. The concept is familiar to most people nowadays, so we won’t spend much time on it. Instead, let’s visualise the CI/CD lifecycle and see where testing sits.

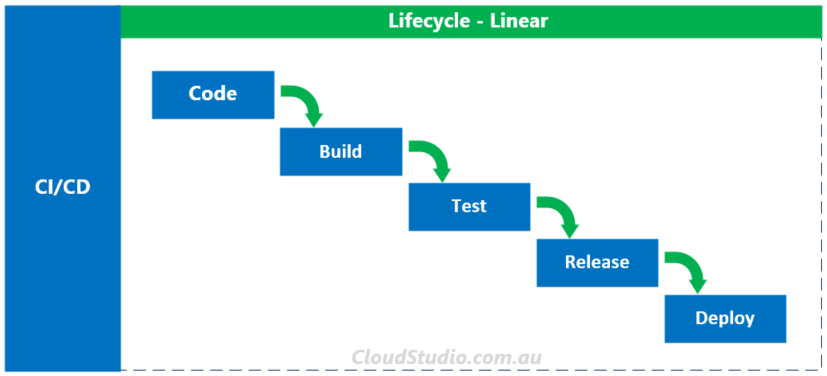

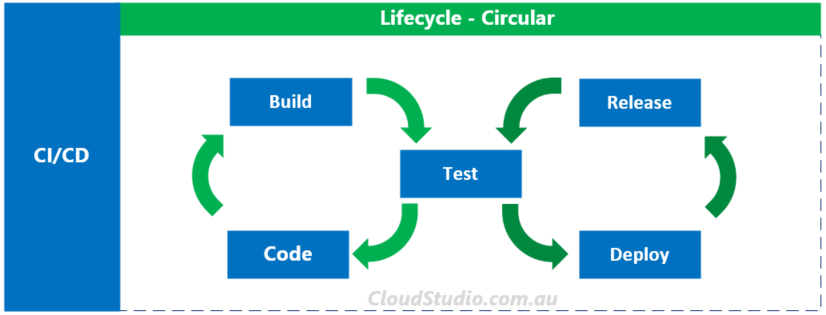

There are two popular lifecycle visualisations for CI/CD, linear and circular, as shown below:

We won’t debate which one is more accurate, or the naming of the stages. Instead, let’s focus on where testing sits in each style. In the linear one, testing is represented at only one stage. In the circular one, it’s represented at two stages, as it’s a shared stage for both CI and CD. The question is: “Is that all?” What about unit testing during the code stage? What about testing in production, i.e. during the deploy stage?

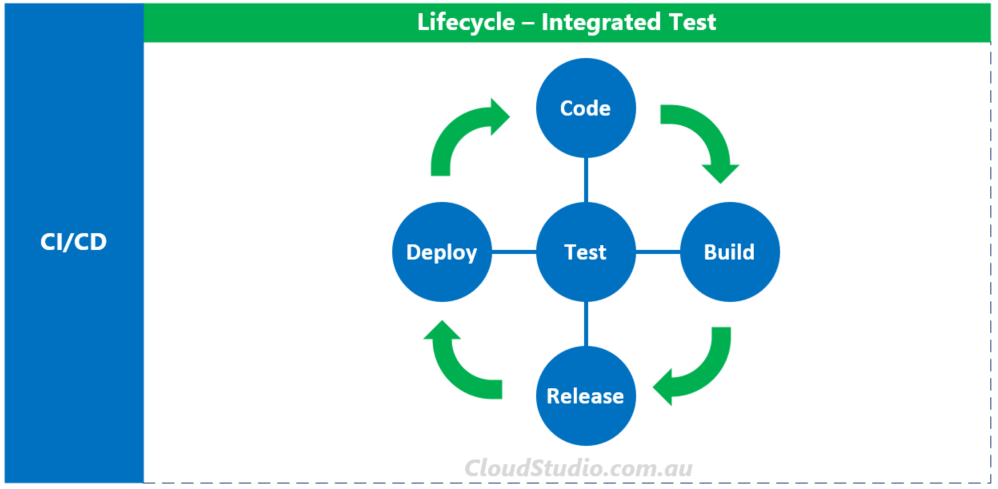

How about we present the CI/CD lifecycle with the testings integrated in each stage, like below?

Viewed from this angle, testing is no longer a single stage. Instead, every stage of the CI/CD lifecycle involves testing, and the nature of each stage determines which types of testing are appropriate. In the next section, we’ll explore the testing types available across all lifecycle stages.

Testing Types

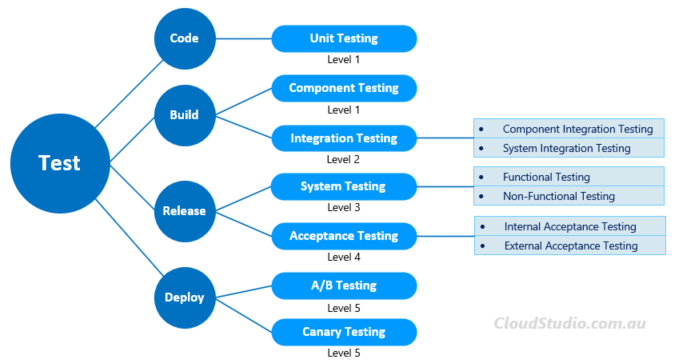

If we put different levels of testings in the Code, Build, Release and Deploy buckets, the mapping will look like below:

The traditional software testing levels model contains four levels, but we are extending it with one more level to map to the Deploy stage of the CI/CD lifecycle. We’ll shed more light on each level in the following sections.

Code Phase

Unit Testing

Unit Testing focuses on testing a section of code or an application that can be logically isolated. We consider that section a “unit”. Developers run unit tests locally, on their own machines, once they have finished coding.

Another way to look at unit testing is that it shouldn’t rely on external systems. For a definition of “external system”, we can borrow from Michael Feathers’s book “Working Effectively with Legacy Code”. In his view, a test is not a unit test if it talks to the database, communicates across the network, touches the file system, requires special system configuration, or can’t be run at the same time as any other test.

Unit Testing is the first gate of testing. From the CI/CD lifecycle point of view, developers should run unit tests before committing code to the code repository.
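As a minimal sketch of what such an isolated test can look like, assume a hypothetical `price_with_tax` function whose tax rate normally comes from an external service. The function name, the regions and the rates are made up for illustration. The external lookup is replaced by an in-memory fake, so the test touches no database, network or file system.

```python
from typing import Callable


def price_with_tax(net: float, region: str, rate_lookup: Callable[[str], float]) -> float:
    """Return the gross price; the tax rate comes from an injected lookup."""
    if net < 0:
        raise ValueError("net price must not be negative")
    return round(net * (1 + rate_lookup(region)), 2)


def fake_rate_lookup(region: str) -> float:
    """In-memory stand-in for a real rate service (hypothetical values)."""
    return {"AU": 0.10, "NZ": 0.15}[region]


def test_price_with_tax_applies_regional_rate() -> None:
    assert price_with_tax(100.0, "AU", fake_rate_lookup) == 110.0


def test_price_with_tax_rejects_negative_price() -> None:
    try:
        price_with_tax(-1.0, "AU", fake_rate_lookup)
    except ValueError:
        return  # the expected outcome
    raise AssertionError("expected ValueError for a negative price")
```

Because the dependency is passed in as a parameter, the same tests can run on a developer’s machine before every commit, with a test runner such as pytest or by calling the test functions directly.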

For further details about unit testing, a recommended article is: Unit Testing in the Development Phase of the CI/CD Pipeline.

Build Phase

The build phase is when we test individual components and the integration between them. It’s also important to test whether the code pushed from the code phase breaks any existing functions or features.

Component Testing

If Unit Testing targets the smallest unit, e.g. a function, then Component Testing targets a bigger test object, e.g. a module. Component Testing is performed on an individual component without integrating it with other components. In this respect, it’s similar to Unit Testing.

Why can’t we merge Unit Testing and Component Testing? In some situations, several developers work together to co-develop a module of a program. Each of them runs their own unit tests locally. But once they have pushed their code to the repository and merged it, they should run separate tests against the co-developed module.

This extra layer of testing again serves the “Fail Fast” principle: the earlier we find a defect, the less expensive it is to fix.
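To make the distinction concrete, here is a rough sketch under assumed names: two developers each contribute one function (a unit), and a hypothetical `Cart` class is the co-developed component. The component test exercises `Cart` only through its public interface, after the two units have been merged, and still without any other component involved.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


def line_total(unit_price: float, quantity: int) -> float:
    """Unit contributed by developer A (hypothetical)."""
    return unit_price * quantity


def apply_discount(total: float, percent: float) -> float:
    """Unit contributed by developer B (hypothetical)."""
    return total * (1 - percent / 100)


@dataclass
class Cart:
    """The co-developed component: combines both units behind one interface."""
    discount_percent: float = 0.0
    lines: List[Tuple[float, int]] = field(default_factory=list)

    def add(self, unit_price: float, quantity: int) -> None:
        self.lines.append((unit_price, quantity))

    def total(self) -> float:
        subtotal = sum(line_total(price, qty) for price, qty in self.lines)
        return round(apply_discount(subtotal, self.discount_percent), 2)


def test_cart_total_combines_lines_and_discount() -> None:
    cart = Cart(discount_percent=10)
    cart.add(20.0, 2)  # line total 40.0
    cart.add(5.0, 4)   # line total 20.0
    assert cart.total() == 54.0  # 60.0 minus 10%
```

Each unit could pass its own unit tests and the merged module could still be wrong, for example if the discount were applied per line instead of on the subtotal. That is the kind of defect this layer is meant to catch early.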

Integration Testing

Integration Testing is performed to expose defects in the interactions between integrated components or systems. Based on the type of integration, we can test integrations between components within a system, or between two different systems.

- Component Integration Testing

The scope of this test is to expose defects in the interfaces and interactions between integrated components.

- System Integration Testing

The scope of this test is wider, i.e. testing the integration between systems, either internal or external ones.
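A component integration test can be sketched as follows. The two classes, `InventoryRepository` and `OrderService`, are hypothetical and exist only for this illustration. Unlike a unit test, the test wires the real repository (backed by an in-memory SQLite database) into the service, so it is the interaction between the two components that gets exercised.

```python
import sqlite3


class InventoryRepository:
    """Component 1: persists stock levels in a SQLite database."""

    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS stock (sku TEXT PRIMARY KEY, qty INTEGER NOT NULL)"
        )

    def set_stock(self, sku: str, qty: int) -> None:
        self.conn.execute(
            "INSERT OR REPLACE INTO stock (sku, qty) VALUES (?, ?)", (sku, qty)
        )

    def get_stock(self, sku: str) -> int:
        row = self.conn.execute(
            "SELECT qty FROM stock WHERE sku = ?", (sku,)
        ).fetchone()
        return row[0] if row else 0


class OrderService:
    """Component 2: places orders and relies on the repository's interface."""

    def __init__(self, inventory: InventoryRepository) -> None:
        self.inventory = inventory

    def place_order(self, sku: str, qty: int) -> bool:
        available = self.inventory.get_stock(sku)
        if qty <= 0 or qty > available:
            return False
        self.inventory.set_stock(sku, available - qty)
        return True


def test_order_reduces_stock_through_real_repository() -> None:
    repo = InventoryRepository(sqlite3.connect(":memory:"))
    repo.set_stock("lego-911", 3)
    service = OrderService(repo)

    assert service.place_order("lego-911", 2) is True
    assert repo.get_stock("lego-911") == 1
    assert service.place_order("lego-911", 5) is False  # not enough stock left
    assert repo.get_stock("lego-911") == 1              # stock is unchanged
```

A system integration test would follow the same pattern, but the collaborator would be another system (internal or external) rather than a component of the same one.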

In traditional waterfall practice, developers conduct integration testing after unit testing and component testing. In the CI/CD lifecycle, which is usually paired with Agile, integration testing may follow different best practices. This article provides good details on integration testing best practices under Agile development: 6 test practices for integration testing with continuous integration.

Release Phase

System Testing

If Integration Testing aims to identify interface errors, System Testing helps to identify whether the system as a whole complies with the specified requirements. It takes testing one level further than Integration Testing.

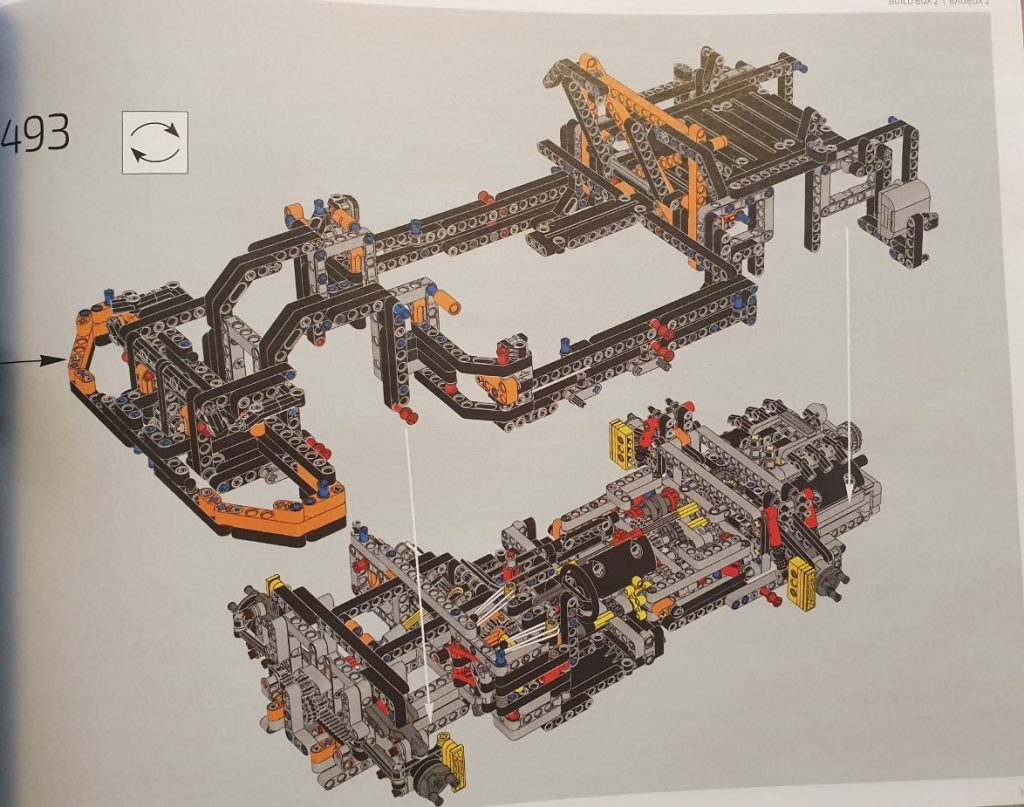

We can use the pictures below to present the differences between these two type of testings. I took two pictures from my Porsche 911 (yep, the Lego one, not a real one) user instructions. When I assembled two components together, I conducted an “Integration Testing” as to test whether these two components can connect to each other as expected.

On the other hand, I conducted a “System Test” when I finished assembling the whole car, to make sure the car could perform the fancy functions Lego advertised.

Hopefully this analogy clarifies the difference between Integration Testing and System Testing. However, saying “I conducted a system test” is rather vague. System Testing represents a level of testing, but what types of testing can we conduct at this level?

At a high level, we can group all types of system testing into Functional Testing and Non-Functional Testing buckets (yep, no more Lego, back to CI/CD now). Below are common types under these two buckets:

Functional Testing

- Feature Testing

- Smoke Testing

- Regression Testing

Non-Functional Testing

- Usability Testing

- Performance Testing

- Security Testing

- Compliance Testing

Of all the testing levels, System Testing contains the most types and methods. We won’t drill into each type in this article. For more details about the types of system testing, please refer to Software Testing Fundamentals – System Testing.

Acceptance Testing

Acceptance Testing is the final testing before deployment. In waterfall practice, it’s usually the last level of testing; in a typical four-level testing structure, it sits on level 4. However, in Agile practice paired with the CI/CD lifecycle, it may not be the last level. Hence we added a level 5 tier to our mapping diagram. We’ll discuss what’s in level 5 in the Deploy Phase section.

Depending on whether it’s an in-house development or a B2C development, the party who executes the acceptance testing may vary. From this angle, we can categorize acceptance testing into two types: Internal Acceptance Testing and External Acceptance Testing.

Internal Acceptance Testing

Internal Acceptance Testing can be conducted for either in-house or B2C development. In the in-house scenario, an internal team conducts the acceptance testing, usually a team that was not involved in the development, e.g. members of product management. Once the product passes their acceptance testing, the development team can move on to the deployment phase.

In the B2C scenario, internal acceptance testing is optional. If you choose to do it, you’ll still have to complete external acceptance testing on the customer side before moving to deployment. Its value is sometimes debated, as it may look like a “gild the lily” exercise. However, from the “fail fast” point of view, it does have value. For example, it usually takes longer for acceptance testing feedback to cycle from the customer side back to the development team, so catching defects in an internal round first may speed up the overall acceptance testing.

External Acceptance Testing

External Acceptance Testing, as the name suggests, is conducted on the customer side. Its scope aligns with the requirement definition. The pass rate doesn’t have to be 100%. In some cases, even after many rounds and levels of testing, defects are still detected during the acceptance testing phase. Depending on the defined entry criteria, time and other factors, the development company and the customer can choose to fix defects selectively, e.g. only the high and medium ones, before moving to deployment.

For a good breakdown and examples of acceptance testing, a recommended article is: What is User Acceptance Testing (UAT) with examples.

Deploy Phase

In the B2C scenario discussed in the section above, the customers who conduct the acceptance testing may not be the end users themselves. In some cases, they only represent the admin user groups, and their clients are the actual end users of the product. This is one of the reasons why we extended testing to a level 5 that contains Canary Testing, A/B Testing and more.

Canary Testing:

Canary Testing takes its name from the mining industry. Coal miners took a canary with them into the mine to detect odorless but toxic gases. Fortunately, technology has taken the place of canaries in this scenario, but the terminology has been retained.

In the CI/CD lifecycle, once we have completed Acceptance Testing, a new feature is ready for deployment to the production environment. It may be bug free, or it may not. If there is an undiscovered defect, how will it impact real users? We don’t know, because no real end users were involved in any earlier level of testing.

With Canary Testing, we deploy the new feature to a small portion of the fleet and expose it to a small group of users. If there is a defect, only a small percentage of users is affected, and the feature can be fixed or rolled back quickly. This minimizes the risk during the deployment stage. It’s particularly important when the new feature will face a large volume of end users.

Canary Testing can be conducted in many ways. One popular way is to use feature flags. We’ll talk more about feature flags in a separate article.
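As a rough illustration of the feature flag approach, the sketch below assigns each user to the canary group by hashing the user ID. The function names, the flag name `new-checkout` and the 5% rollout are assumptions made for this example, not part of any particular feature flag product. Hashing keeps the assignment deterministic, so a given user sees the same version on every request.

```python
import hashlib


def in_canary(user_id: str, feature: str, rollout_percent: float) -> bool:
    """Deterministically place a user inside or outside the canary group."""
    digest = hashlib.sha256(f"{feature}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) % 10_000  # stable bucket in the range 0..9999
    return bucket < rollout_percent * 100  # e.g. 5% covers buckets 0..499


def render_checkout(user_id: str) -> str:
    """Serve the new feature to canary users only (hypothetical feature)."""
    if in_canary(user_id, "new-checkout", rollout_percent=5):
        return "new checkout"
    return "current checkout"
```

Rolling back then means setting the percentage to 0, and a full release means raising it to 100, without redeploying the application.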

A/B Testing:

A/B Testing is a method to compare two versions of a product (e.g. an application or web page) to determine which one performs better. The two versions here refer to the current version (A=Control) and the new version (B=Variation).

While this testing method is often used in the IT industry, it has a much longer history. For example, agricultural experiments, such as using different fertilizers on different plots of land to compare the harvest, are a common use case of A/B Testing. This article may help in understanding A/B Testing: A Refresher on A/B Testing.

Back in the IT world, an example of A/B Testing is setting different colors for a button on a web page. Comparing the click-through rates helps determine which color brings more clicks. We can then apply the winning color to this button, and potentially to other buttons throughout the website.
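The button comparison can be sketched in a few lines. The click and view counts below are invented for illustration. The two-proportion z-test is our addition rather than something the example above prescribes; it is one common way to check that a difference in click-through rate is unlikely to be random noise.

```python
import math


def click_through_rate(clicks: int, views: int) -> float:
    """Share of page views that led to a click on the button."""
    return clicks / views


def two_proportion_z(clicks_a: int, views_a: int, clicks_b: int, views_b: int) -> float:
    """z-score for the difference between the click-through rates of B and A."""
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    return (click_through_rate(clicks_b, views_b)
            - click_through_rate(clicks_a, views_a)) / std_err


# Hypothetical results: A = current color (control), B = new color (variation).
ctr_a = click_through_rate(200, 10_000)
ctr_b = click_through_rate(260, 10_000)
z = two_proportion_z(200, 10_000, 260, 10_000)

# |z| above 1.96 corresponds to roughly the 5% two-sided significance level.
winner = "B" if z > 1.96 else "A" if z < -1.96 else "no clear winner"
print(f"CTR A={ctr_a:.2%}, CTR B={ctr_b:.2%}, winner: {winner}")
```

With small samples the raw rates can differ by chance alone, which is why the sketch only declares a winner once the z-score passes the threshold.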

People often ask about the difference between A/B Testing and Canary Testing. Are the two the same? No, they are different types of testing. We’ve summarized a few key differences below.

- A/B Testing focuses on users’ response to a working feature, e.g. the color of a button; Canary Testing focuses on whether a new feature works, e.g. whether the button works.

- A/B Testing can allocate various percentages of users to the A or B version of the feature, e.g. 50-50 or 80-20; Canary Testing usually allocates only a small percentage of users to the new feature, like the size of a canary.

- In terms of user sessions, A/B Testing can be stateful or stateless; Canary Testing should be stateful only.

Regression Testing:

Last but not least, we’d like to talk a bit about Regression Testing. After all the levels and rounds of testing, we may have detected numerous defects and applied fixes to them. How do we ensure that the components, integrations and end-to-end flows that were previously tested still perform the same way? We will (must) use Regression Testing. As shown in the integrated testing diagram in the earlier section, we shouldn’t limit Regression Testing to a certain stage of the CI/CD lifecycle. Instead, we should apply it across all stages of the lifecycle.
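One simple way to picture a regression suite is as a growing list of cases that pin down behaviour already verified or fixed in earlier rounds. The sketch below assumes a hypothetical `slugify` function, and the defect IDs are made up. The whole list is re-run after every change, at whichever stage the change lands.

```python
from typing import List


def slugify(title: str) -> str:
    """Function under test (hypothetical): turn a title into a URL slug."""
    cleaned = "".join(ch if ch.isalnum() else " " for ch in title.lower())
    return "-".join(cleaned.split())


# Each entry pins behaviour that was verified, or fixed, in an earlier round.
REGRESSION_CASES = [
    ("BUG-101: double spaces", "Hello  World", "hello-world"),
    ("BUG-117: surrounding punctuation", "  CI/CD!  ", "ci-cd"),
    ("baseline: plain title", "Testing", "testing"),
]


def run_regression_suite() -> List[str]:
    """Return the names of the cases whose output no longer matches."""
    return [
        name
        for name, given, expected in REGRESSION_CASES
        if slugify(given) != expected
    ]
```

An empty result means nothing that worked before has been broken; any name in the returned list points straight at the behaviour that regressed.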

Summary

The main purpose of this article is to highlight that testing should be conducted across the whole CI/CD lifecycle. A secondary point is that the traditional four testing levels may not be enough. Hence we added a level 5 on top to reflect the testing-in-production part.

The testing methods covered in this article represent only a portion of the overall testing family. Many other testing methods are available for various situations. Regardless of which methods we choose, they all serve the same purposes: verifying the fulfillment of requirements and managing risks.