In our Cloud Product Mapping article, we mapped the Compute – FaaS services provided by AWS, Azure and Google Cloud (GCP). In this article, we’ll compare these three vendors’ FaaS services in more detail.

About Serverless and FaaS

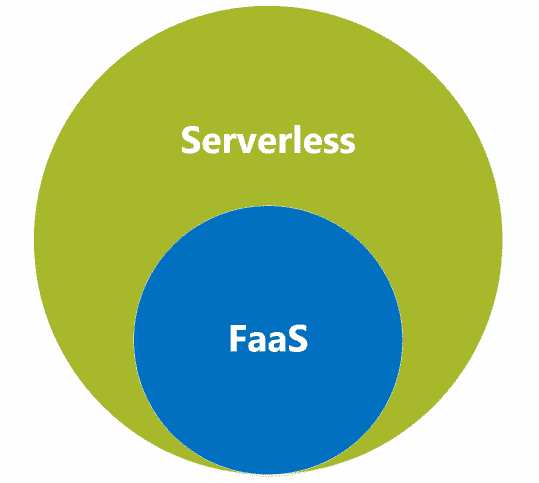

Before we start the service comparison, let’s do a high-level conceptual comparison first. This will help us clarify the scope of the services that we need to compare. The two concepts we need to put side by side are Serverless and FaaS. We often hear people say that Serverless and FaaS are the same thing. Is that so?

Let’s test it by looking at the following two statements and putting a true or false next to them:

- Serverless architectures always contain FaaS – False

- FaaS are always counted as serverless – True

The first statement is false because many serverless architecture building blocks don’t contain FaaS. One example is a building block based on AWS Fargate: we can count it as a serverless architecture, but it doesn’t need to involve any FaaS. Functions in FaaS are usually short-lived, ephemeral, stateless and single-purpose. These traits don’t (and can’t) represent the nature of the wider set of serverless services.

For the second statement, we’d like to borrow Wikipedia’s FaaS definition: “Function as a service (FaaS) is a category of cloud computing services that provides a platform allowing customers to develop, run, and manage application functionalities without the complexity of building and maintaining the infrastructure typically associated with developing and launching an app. Building an application following this model is one way of achieving a ‘serverless’ architecture.” The final sentence of the quote says it all.

So we can summarise the relationship between Serverless and FaaS with the diagram above.

In other words, Serverless and FaaS are in a parent-child relationship. The services we are going to compare in this article are in the FaaS scope, i.e. AWS Lambda, Azure Functions and GCP Cloud Functions.

FaaS Service Offerings

Given it’s Function as a Service, the naming of Azure Functions and GCP Cloud Functions is pretty straightforward. But why Lambda? Following AWS’s typical naming convention, shouldn’t it be called something like Serverless Compute Cloud (SC2) or Elastic Function Cloud (EFC)? Instead, AWS derived the name from lambda calculus, which hints that the service is, in a way, about computing functions. Given AWS was the first CSP to launch a FaaS service (back in 2014), the freedom of naming choice was theirs. It somehow reminds us of Elon Musk’s Tesla.

Enough of the distraction about names; let’s drill down to the service offering comparison. As usual, we’ll compare these FaaS offerings side by side across the following areas:

| Area | AWS Lambda | Azure Functions | GCP Cloud Functions |
|---|---|---|---|
| Plans | Single default plan (optional Provisioned Concurrency) | Consumption, Premium, Dedicated | Single default plan (1st Gen, 2nd Gen) |
| Runtime | Node.js, Python, Java, C#, F#, Go, Ruby, PowerShell, Custom Runtime | Node.js, Python, Java, C#, F#, TypeScript, PowerShell, Custom Handlers | Node.js, Python, Java, C#, F#, Go, Ruby, PHP |
| Orchestration | Step Functions | Logic Apps, Durable Functions | Workflows |
| Monitoring | CloudWatch (Lambda Insights), X-Ray, CodeGuru Profiler | Azure Monitor (Application Insights, Metrics Explorer, Log Analytics) | Cloud Monitoring, Cloud Logging, Cloud Trace |
| Triggers | HTTP, Timer, Alexa, Apache Kafka, API Gateway, Application Load Balancer (ALB), CloudFormation, CloudFront, CloudWatch Logs, CodeCommit, CodePipeline, Cognito, Config, Connect, DynamoDB, Elastic File System (EFS), EventBridge, IoT Events, Kinesis, Kinesis Data Firehose, Lex, MQ, Simple Email Service (SES), Simple Notification Service (SNS), Simple Queue Service (SQS), Simple Storage Service (S3), S3 Batch, Secrets Manager, X-Ray | HTTP, Timer, Blob Storage, Cosmos DB, Event Grid, Event Hub, Queue Storage, Service Bus Queue, Service Bus Topic | HTTP, Cloud Pub/Sub, Cloud Storage, Firebase (1st Gen), Eventarc (2nd Gen) |

Plans

From the plans perspective, AWS and GCP take similar approaches. Both try to cover as many FaaS scenarios as possible in their default plan, like a Swiss Army knife. Within its “Swiss Army knife”, AWS has gone a step further by offering additional features such as Provisioned Concurrency, which addresses the “cold start” problem by keeping pre-initialized function execution instances ready. GCP, on the other hand, has increased the number of concurrent requests a single function instance can handle in its 2nd Gen.

Azure approaches it differently from AWS and GCP. It provides three different FaaS plans, i.e. the Consumption Plan, Premium Plan and Dedicated Plan. It’s like splitting one Swiss Army knife into three: some basic and simple, some with advanced features, each addressing different needs. For example, to address cold starts, users can choose the Premium or Dedicated plan instead of the Consumption plan.
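To make the cold start idea more concrete, here is a minimal Python sketch. It isn’t tied to any CSP’s SDK: the `handler` and `_expensive_init` names are our own illustration, and the only assumption is that module-level state survives while the same execution instance is reused.

```python
import time

# Module-level state survives between invocations that reuse the same
# execution instance (a "warm" start). It is rebuilt on a cold start.
_INIT_COUNT = 0
_CLIENT = None


def _expensive_init():
    """Hypothetical stand-in for loading config, SDK clients or models."""
    global _INIT_COUNT
    _INIT_COUNT += 1
    return {"created_at": time.time()}


def handler(event, context=None):
    """Hypothetical function entry point (name and signature are illustrative)."""
    global _CLIENT
    cold = _CLIENT is None
    if cold:
        # This cost is paid only on the first call per execution instance.
        _CLIENT = _expensive_init()
    return {"cold_start": cold, "init_count": _INIT_COUNT}
```

Calling `handler` twice in the same process reports a cold start only for the first call. Pre-initialized instances (Provisioned Concurrency, or Azure’s Premium and Dedicated plans) aim to have that first, slower step done before a request arrives.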

Both approaches have their pros and cons. On one hand, giving users too many options may cause confusion and sometimes frustration, for example when a message like “This feature is not supported for your current plan” appears in the selected Azure Functions plan. Azure makes the plan-switching process not too hard to follow, but switching between plans still takes time and effort.

On the other hand, can AWS and GCP keep expanding their all-in-one Swiss Army knife? AWS, as the pioneer of FaaS, provides an interesting case in Lambda@Edge. In theory, Lambda@Edge is a feature of CloudFront. But its pricing still follows the typical Lambda calculation, i.e. compute charges + request charges. So should it be counted as another Lambda plan rather than a CloudFront feature?

All three CSPs have their own approaches to offering FaaS plans. To choose the most suitable FaaS, we need to look further inside these offerings.

Runtime

AWS Lambda, Azure Functions and GCP Cloud Functions all natively support a range of common runtimes, as listed in the comparison table above. The way these CSPs list their supported runtimes differs slightly. For example, Azure lists F# as a supported language, but AWS and GCP don’t specifically call it out. Given that all three CSPs support .NET Core 3.1 and F# is now considered a first-class citizen on .NET Core (with FSI bundled), we chose to include F# in all three CSPs’ runtime lists.

The way they list supported and deprecated versions differs too. We won’t drill down to the version level here; the details are well documented on each CSP’s official site.

Another difference is how they handle custom runtimes, i.e. languages that are not supported natively. Both AWS Lambda and Azure Functions let users build their own runtime, e.g. via AWS’s Lambda Runtime API and Lambda Layers, and Azure Functions’ Custom Handlers. GCP, on the other hand, doesn’t support custom runtimes yet. The workaround is to run the custom runtime in GCP App Engine instead, but App Engine is a different service offering from Cloud Functions. So in terms of custom runtime support, GCP is one step behind AWS and Azure.
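As a rough illustration of what “building your own runtime” means on AWS, here is a minimal sketch of the polling loop a custom runtime runs against the Lambda Runtime API. We use Python only for readability (it is natively supported anyway); the `handle` function is a hypothetical placeholder, and error reporting is left out. A real custom runtime would start this loop from its `bootstrap` executable.

```python
import json
import os
import urllib.request

API_VERSION = "2018-06-01"  # version segment of the Lambda Runtime API paths


def next_url(runtime_api: str) -> str:
    """URL to long-poll for the next invocation event."""
    return f"http://{runtime_api}/{API_VERSION}/runtime/invocation/next"


def response_url(runtime_api: str, request_id: str) -> str:
    """URL to post the result of a given invocation."""
    return (
        f"http://{runtime_api}/{API_VERSION}"
        f"/runtime/invocation/{request_id}/response"
    )


def handle(event: dict) -> dict:
    """Hypothetical business logic; replace with the real function."""
    return {"echo": event}


def run_forever() -> None:
    """Fetch an event, run the handler, post the result, repeat."""
    runtime_api = os.environ["AWS_LAMBDA_RUNTIME_API"]  # host:port set by Lambda
    while True:
        with urllib.request.urlopen(next_url(runtime_api)) as resp:
            request_id = resp.headers["Lambda-Runtime-Aws-Request-Id"]
            event = json.loads(resp.read())
        body = json.dumps(handle(event)).encode()
        req = urllib.request.Request(
            response_url(runtime_api, request_id), data=body, method="POST"
        )
        urllib.request.urlopen(req).close()
```

The loop is deliberately not started at import time, so the helpers can be inspected or tested outside Lambda.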

Orchestration

As we mentioned earlier, functions in FaaS are short-lived, stateless and single-purpose. Functions created within these FaaS services can be considered independent “dots”. So how do we connect these dots? All three CSPs offer workflow services to help users orchestrate and integrate the dots, i.e. AWS Step Functions, Azure Logic Apps and GCP Workflows.

These three services support the same programming paradigm (i.e. declarative) that enables users to describe their workflows. From the UX aspect,

- GCP took the “code-first” approach. In other words, GCP users need to write their workflow code first (in JSON or YAML) and the system then visualises it.

- AWS used to offer only the “code-first” approach as well, where users had to code their workflow in the JSON-based Amazon States Language (ASL) first. AWS then launched Step Functions Workflow Studio, which gives users a low-code option.

- Azure’s Logic Apps supports both a Designer view and a Code view. Azure then enhanced the code side by adding support for Visual Studio, VS Code, Bicep, etc.

In this article our focus is on FaaS, so we won’t drill too deep to compare these workflow services. We’ll write a separate article to compare them instead.

In addition to the workflow services mentioned above, we’d also like to mention another option Azure provides: Azure Durable Functions. Durable Functions is an extension of Azure Functions. It’s built on top of the Durable Task Framework, which enables users to define their workflows imperatively. It also supports multiple languages so that it works with the programming languages used in Azure Functions. From this point of view, Azure provides more options for orchestrating FaaS functions than AWS and GCP.
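The difference between the declarative and imperative styles can be sketched with a toy example. This is our own illustration, not any CSP’s engine: the workflow definition is only loosely modelled on the shape of Amazon States Language (real ASL uses ARNs as resources), and `validate`, `charge` and the tiny interpreter are hypothetical.

```python
# Hypothetical "functions" standing in for deployed FaaS functions.
def validate(order):
    return {**order, "valid": order["qty"] > 0}


def charge(order):
    return {**order, "charged": order["valid"]}


TASKS = {"validate": validate, "charge": charge}

# Declarative style: the workflow is data that an engine interprets.
WORKFLOW = {
    "StartAt": "Validate",
    "States": {
        "Validate": {"Type": "Task", "Resource": "validate", "Next": "Charge"},
        "Charge": {"Type": "Task", "Resource": "charge", "End": True},
    },
}


def run_declarative(workflow, payload):
    """Toy interpreter: walk the states until one is marked as the end."""
    name = workflow["StartAt"]
    while True:
        state = workflow["States"][name]
        payload = TASKS[state["Resource"]](payload)
        if state.get("End"):
            return payload
        name = state["Next"]


# Imperative style: the workflow is ordinary code calling the functions.
def run_imperative(payload):
    return charge(validate(payload))
```

Both styles produce the same result for the same input. The trade-off is that a declarative definition is easy to visualise and validate, while imperative code can use normal language constructs such as loops and exception handling.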

Triggers

A trigger is a declaration that a function is interested in certain event(s). When we associate a trigger with a function, the function can capture and act on these events. In terms of the trigger-to-function relationship, the three CSPs have different setups. In AWS, one Lambda function can be associated with multiple triggers. In Azure and GCP, one function can only be associated with one trigger.

From the trigger type aspect, we can group them into

- HTTP

- Timer

- Native Service Integration

HTTP, the “get out of jail free card”, is supported by all three CSPs. Timer-wise, we can use AWS’s CloudWatch Events, Azure’s built-in Timer Trigger template and GCP’s Cloud Scheduler + Pub/Sub to schedule the execution of our functions. When it comes to native service integration, the differences are big.
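One practical difference with timers is the schedule syntax. The sketch below lists, to the best of our knowledge, how “every five minutes” is written for each option; the dictionary keys and the `field_count` helper are our own naming, so please check each CSP’s documentation before relying on the expressions.

```python
# "Every five minutes" in each platform's schedule syntax (illustrative).
SCHEDULES = {
    # CloudWatch Events schedule expressions: rate(...) or a 6-field cron(...)
    "aws_cloudwatch_events_rate": "rate(5 minutes)",
    "aws_cloudwatch_events_cron": "cron(0/5 * * * ? *)",
    # Azure Timer Trigger uses NCRONTAB, which has a leading seconds field
    "azure_timer_trigger": "0 */5 * * * *",
    # Cloud Scheduler uses the classic 5-field unix-cron format
    "gcp_cloud_scheduler": "*/5 * * * *",
}


def field_count(expression: str) -> int:
    """Count the space-separated fields of a cron-style expression."""
    inner = expression[5:-1] if expression.startswith("cron(") else expression
    return len(inner.split())
```

The field counts differ (six for AWS and Azure, five for GCP), and the extra field does not mean the same thing: AWS appends a year field while Azure prepends seconds. Copying an expression from one platform to another therefore rarely works as-is.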

As shown in the comparison table above, AWS leads when it comes to Lambda’s integration with other native services. The invocation method varies across the listed services. They can be grouped into event-driven invocation (e.g. S3), Lambda polling (e.g. SQS) and special integration (i.e. EFS and X-Ray). Event-driven invocation can be further split into synchronous and asynchronous invocation. It sounds a bit complicated, but it’s not difficult to implement.
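Because one Lambda function can sit behind several triggers, the handler often needs to work out where an event came from. Here is a minimal sketch that tells S3 and SQS records apart by their `eventSource` field. The returned tuples are our own invention, and the sample events in the usage note are trimmed to just the fields we read.

```python
def handler(event, context=None):
    """Route each record by the service that produced it."""
    results = []
    for record in event.get("Records", []):
        source = record.get("eventSource")
        if source == "aws:s3":
            # Event-driven invocation: S3 pushes the event to Lambda.
            s3 = record["s3"]
            results.append(("s3", s3["bucket"]["name"], s3["object"]["key"]))
        elif source == "aws:sqs":
            # Polling: Lambda reads the queue and hands over a batch.
            results.append(("sqs", record["messageId"], record["body"]))
        else:
            results.append(("unknown", source, None))
    return results
```

For example, a record such as `{"eventSource": "aws:sqs", "messageId": "m-1", "body": "hello"}` is returned as `("sqs", "m-1", "hello")`. The function code stays the same regardless of whether the service pushes the event or Lambda polls for it; that difference is handled by the platform.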

On the Azure Functions side, the list of natively integrated services is shorter, but Azure throws in a Binding feature that can be used together with the trigger. As we mentioned earlier, we can only associate one trigger with an Azure function, but we can attach multiple bindings to it. This enables us to trigger a function from one service and get data from multiple bound resources. Another difference between triggers and bindings is that a trigger has only one direction, and it’s always inbound. Bindings, on the other hand, can have the direction in, out or inout. This lets users mix and match different bindings to suit their needs. For more information about bindings, see Azure Functions Triggers and Bindings.
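The one-trigger, many-bindings rule is easier to see in a configuration sketch. Below we mirror the shape of an Azure Functions `function.json` file as a Python dictionary and check the rule ourselves. The queue name, blob paths and binding names are made up for illustration, and `summarise` is our own helper rather than part of any Azure tooling.

```python
# Shape of a function.json file, expressed as a Python dict (illustrative).
FUNCTION_JSON = {
    "bindings": [
        # Exactly one trigger, always inbound.
        {"type": "queueTrigger", "direction": "in", "name": "msg",
         "queueName": "orders", "connection": "AzureWebJobsStorage"},
        # Input binding: extra data fetched for the function.
        {"type": "blob", "direction": "in", "name": "template",
         "path": "templates/invoice.txt", "connection": "AzureWebJobsStorage"},
        # Output binding: where the function writes its result.
        {"type": "blob", "direction": "out", "name": "invoice",
         "path": "invoices/{rand-guid}.txt", "connection": "AzureWebJobsStorage"},
    ]
}


def summarise(config):
    """Check the one-trigger rule and list the remaining bindings."""
    triggers = [b for b in config["bindings"] if b["type"].endswith("Trigger")]
    others = [b for b in config["bindings"] if not b["type"].endswith("Trigger")]
    if len(triggers) != 1:
        raise ValueError("an Azure function needs exactly one trigger")
    if triggers[0]["direction"] != "in":
        raise ValueError("a trigger is always inbound")
    return {
        "trigger": triggers[0]["type"],
        "bindings": [(b["name"], b["direction"]) for b in others],
    }
```

In this sketch, a queue message triggers the function, a blob is read as input and another blob is written as output, all without the function code calling the storage SDK directly.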

GCP’s trigger list initially looks to be the shortest. In Cloud Functions 1st Gen, GCP had some extra triggers from Firebase, e.g. Firebase Realtime Database triggers, Firebase Authentication triggers, etc. However, the list didn’t go very far, given that Firebase is a mobile application development platform. This changed when GCP introduced Cloud Functions 2nd Gen, which added Eventarc triggers to the list. They enable users to invoke functions via any event type supported by Eventarc, which has boosted the list of supported services to 90+. The temporary catch is that Eventarc doesn’t support direct events from Firestore, so users need to go back to 1st Gen to use those events. Hopefully GCP will improve this area and make Eventarc the one-stop shop for event triggers.

Monitoring

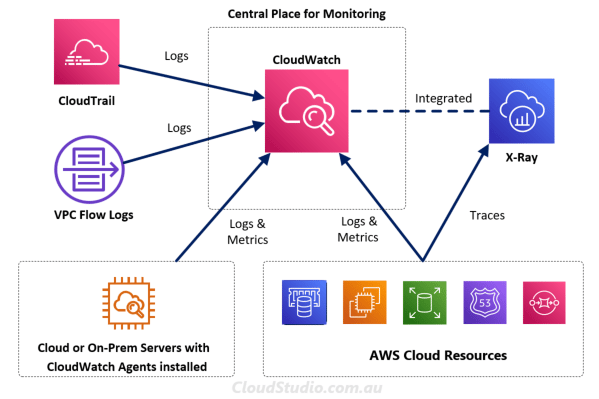

Once we have the functions orchestrated and triggers bound, the next question is how we get insight into what happens during each invocation and event. AWS provides a number of options for this purpose, including Lambda Insights in CloudWatch, CodeGuru Profiler and X-Ray. As we discussed in our Cloud Monitoring Comparison article, logs, metrics and traces are the three key inputs for monitoring cloud services (Lambda in this case). The diagram above is borrowed from that article.

Lambda is one of the AWS Cloud Resources (the bottom right box), and its logs, metrics and traces are collected by the combination of CloudWatch and X-Ray. We can use these two monitoring services to gain thorough insight into our Lambda functions, individually or as an orchestrated whole. CodeGuru goes one step further by adding ML-powered recommendations to help improve the quality and performance of Lambda functions.

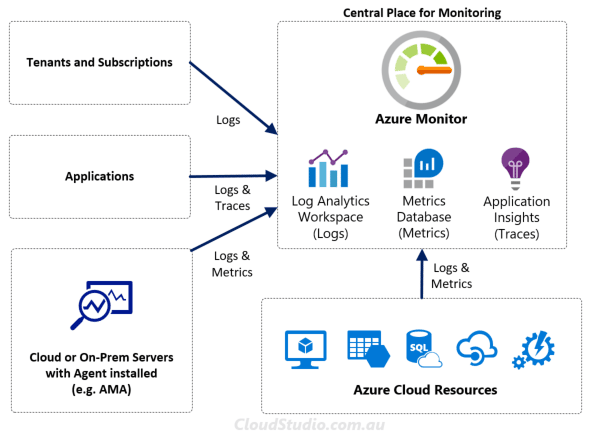

On the Azure side, Functions offers built-in integration with Application Insights, where telemetry data is sent to the connected Application Insights instance. The data includes logs generated by the Functions host, traces written from the function code and performance data. The list of supported features is available at Application Insights for Azure Functions Supported Features. In addition to Application Insights, there is more we can leverage to monitor how functions are running.

As shown above, Application Insights is only one part of Azure Monitor. We can also analyse Azure Functions metrics using the Azure Monitor Metrics Explorer and analyse logs using a Log Analytics workspace. With this combo, all three observability pillars are covered for Azure Functions as well.

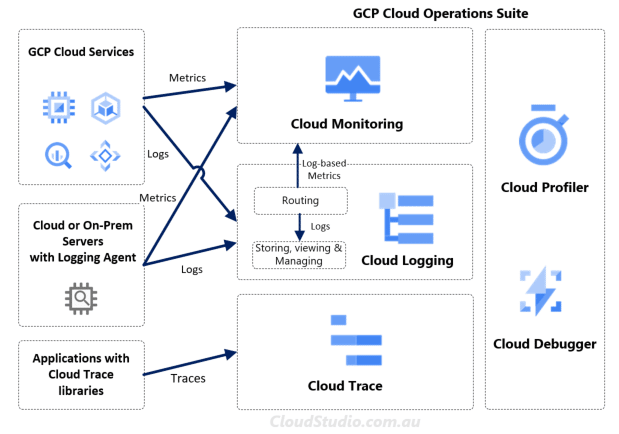

GCP also has its combo: Cloud Monitoring, Cloud Logging and Cloud Trace, as shown above. This combo used to be called Stackdriver and is now called Cloud Operations Suite. For more information, see our Cloud Monitoring Service Comparison article.

Cost

Service cost is an important factor for CSP selection and architectural design. For FaaS services, the costs from all three CSPs can be broken down into two parts:

- the number of invocations

- the duration of execution

To encourage experiments and proof of concepts, all three CSPs provide free tiers. As usual, let’s take a look at what’s free first.

| CSP | Free Invocation | Free Execution |
|---|---|---|
| AWS | 1 million | 400,000 GB-seconds |
| Azure | 1 million | 400,000 GB-seconds |
| GCP | 2 million | 400,000 GB-seconds |

These free tiers are pretty generous for experiments or proofs of concept. They are also perpetual, meaning we can always deduct these free quotas from our ongoing monthly costs. Beyond these thresholds, the costs vary per CSP, per service plan and per region, as well as with other factors, e.g. storage, networking, etc. So comparing dollar amounts becomes almost impossible. However, there are some high-level differences. We’ll pick a few to talk about.

On the invocation side, the costs from Azure and GCP are not region-specific, meaning the unit cost is the same regardless of which region hosts the function. For instance, the Azure Consumption Plan charges $0.20 per million invocations and GCP charges $0.40 per million. On the other hand, AWS’s unit price varies for some regions. The majority of regions have a unit cost of $0.20 per million invocations, but some are dearer, e.g. $0.27 for Africa and $0.25 for Middle East.
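As a back-of-the-envelope illustration of the invocation charge, the sketch below combines the free tiers from the table above with the unit prices just quoted. It assumes a $0.20 AWS region and the Azure Consumption Plan, and it ignores everything except invocation count; the names are our own.

```python
REQUEST_PRICING = {
    # csp: (free invocations per month, USD per million beyond the free tier)
    "aws": (1_000_000, 0.20),    # assumes a region priced at $0.20
    "azure": (1_000_000, 0.20),  # Consumption Plan
    "gcp": (2_000_000, 0.40),
}


def monthly_request_cost(csp: str, invocations: int) -> float:
    """Invocation charge in USD after deducting the free tier."""
    free, per_million = REQUEST_PRICING[csp]
    billable = max(0, invocations - free)
    return billable / 1_000_000 * per_million
```

For 5 million invocations in a month, this works out to about $0.80 on AWS and Azure and about $1.20 on GCP. GCP’s larger free tier makes it the cheaper option only at low volumes: at 2 million invocations GCP is still free while AWS and Azure charge about $0.20.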

On the execution side, what lies underneath the duration is resource consumption, e.g. the allocated memory. Azure calculates memory in a more cost-friendly way than AWS and GCP: it measures memory consumption dynamically, rounding up to the nearest 128 MB. AWS and GCP, on the other hand, charge statically for the memory configured by users. For example, if we configure 512 MB of memory and our function only uses 128 MB, we are still charged at the 512 MB rate, whereas with Azure we only pay for 128 MB. CPU is another resource being consumed, but in all the CSPs’ default plans, CPU is automatically allocated in proportion to the allocated memory.
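The memory example can be turned into a small GB-seconds calculation. This is a simplified sketch under our own assumptions: it treats 1 GB as 1,024 MB, ignores how each CSP rounds the duration, and leaves out any separate CPU charge. The function names are ours.

```python
import math

FREE_GB_SECONDS = 400_000  # monthly free execution quota from the table above


def gb_seconds_configured(configured_mb: float, seconds: float) -> float:
    """AWS/GCP style: billed on the memory configured for the function."""
    return configured_mb / 1024 * seconds


def gb_seconds_observed(used_mb: float, seconds: float, step_mb: int = 128) -> float:
    """Azure Consumption style: observed memory rounded up to 128 MB steps."""
    billed_mb = math.ceil(used_mb / step_mb) * step_mb
    return billed_mb / 1024 * seconds


def billable(gb_seconds: float, free: float = FREE_GB_SECONDS) -> float:
    """GB-seconds left to pay for after deducting the free tier."""
    return max(0.0, gb_seconds - free)
```

Take 1 million seconds of total execution time in a month, with 512 MB configured but only 128 MB used. Billing on configured memory gives 500,000 GB-seconds, of which 100,000 are billable after the free tier. Billing on observed memory gives 125,000 GB-seconds, which stays within the free tier.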

There are a lot more detailed variations in the cost calculation. The sample calculations in AWS Lambda Pricing, Azure Functions Pricing and GCP Cloud Functions Pricing are helpful for understanding the calculation in real cases.

Summary

In this article we’ve compared FaaS plans, runtimes, orchestration, triggers, monitoring and cost across AWS, Azure and GCP. Despite all the variations, these three CSPs are all building ecosystems around their FaaS services. This can be a double-edged sword for some types of organization. For organizations that have multi-cloud defined in their cloud strategy, these ecosystems can cause vendor lock-in. So all the aspects we’ve compared above are only one dimension. Before choosing a vendor, we should take a step back and look at the benefits and impacts both vertically and horizontally.