Cloud-based storage services have been on the market for quite a while, but they show no sign of slowing down.

“The global cloud storage market is projected to grow from $83.41 billion in 2022 to $376.37 billion by 2029, at a CAGR of 24.0% in forecast period, 2022-2029” (Ref: Fortune Business Insights’ market report).

AWS, Azure and GCP are key players in this market. In this article, we will compare the storage services from these three Cloud Service Providers (CSPs).

Types of Cloud Storage

As usual, let’s spend a bit of time looking at the types of cloud storage before drilling into the storage service comparison.

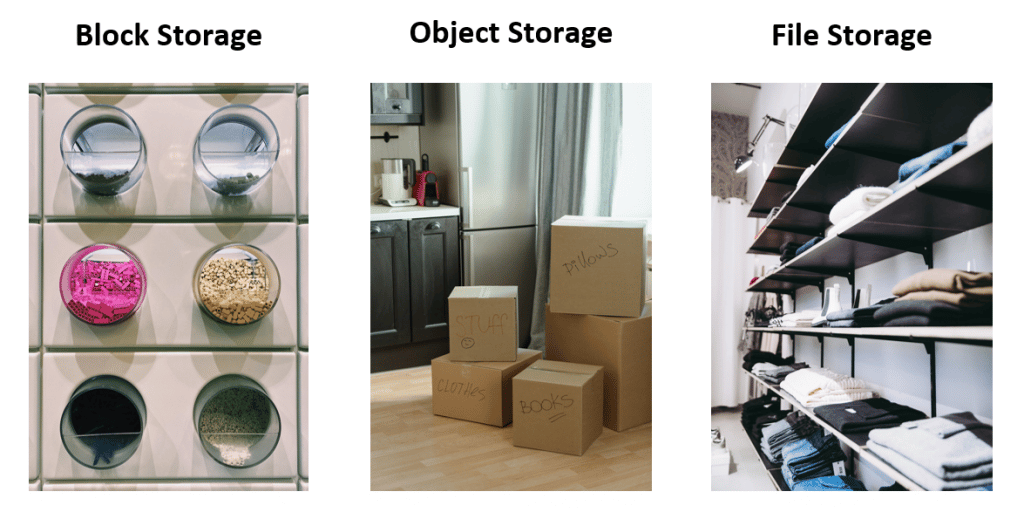

In general, there are three types of cloud data storage: block storage, object storage and file storage. Just by looking at the names, it’s not easy to tell the differences, e.g. a file is also an object, isn’t it? Normally, we would just list the technical definitions of these three storage types. But let’s try a different way here.

Let’s consider digital data as Lego toys first. When we want to store them in Lego storage containers, these toys will be disassembled and stored as small Lego blocks. When we want these toys again, we can retrieve relevant blocks and assemble them back. If we want to change some part of the toys, the same way of working applies.

Now let’s consider digital data as clothes and see what happens. We can still store them in the Lego storage containers. By following the same way of working, we’ll have to cut our clothes into small pieces to store them. When we want to wear the clothes, we’ll need to stitch all the pieces back. But we wouldn’t want our clothes to be cut and stitched over and over again, would we?

We usually put our clothes in boxes and label the boxes, or we put our clothes in wardrobes or on shelves. When we want to wear our clothes, we either use the label (like the metadata) to find the matching boxes and get the clothes out of them, or go to the relevant shelves (like the hierarchy) to find them. And picking up clothes from shelves is definitely faster than retrieving them from boxes.

If we relate this analogy back to technology terms, we can map the Lego containers to Block Storage, the boxes to Object Storage, and the shelves to File Storage. It might not be the best analogy but it’s one way to look at the differences among these three storage types. Now let’s drill into the real storage services.
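To make the analogy a little more concrete, here is a minimal Python sketch of the three models. It is a toy, in-memory illustration only: the tiny block size, the dictionary layouts and all function names are our own assumptions and do not reflect how any CSP implements its storage.

```python
# Toy in-memory models of the three storage types (illustrative only).

BLOCK_SIZE = 4  # bytes per block; tiny on purpose so the split is visible


def to_blocks(data: bytes, block_size: int = BLOCK_SIZE) -> list[bytes]:
    """Block storage: cut data into fixed-size, numbered blocks (the Lego pieces)."""
    return [data[i:i + block_size] for i in range(0, len(data), block_size)]


def from_blocks(blocks: list[bytes]) -> bytes:
    """Reassemble the original data from its blocks."""
    return b"".join(blocks)


# Object storage: a flat set of keys, each holding a whole object plus metadata
# (the labelled boxes).
object_store = {
    "winter-jacket": {"data": b"jacket", "metadata": {"season": "winter"}},
    "summer-shirt": {"data": b"shirt", "metadata": {"season": "summer"}},
}


def find_by_label(store: dict, key: str, value: str) -> list[str]:
    """Look objects up through their metadata rather than their location."""
    return sorted(
        name for name, obj in store.items() if obj["metadata"].get(key) == value
    )


# File storage: a hierarchy of directories that is walked by path (the shelves).
file_tree = {"wardrobe": {"top-shelf": {"hat.txt": b"hat"}, "bottom-shelf": {}}}


def read_path(tree: dict, path: str) -> bytes:
    """Follow each path component down the hierarchy to reach the file."""
    node = tree
    for part in path.strip("/").split("/"):
        node = node[part]
    return node


blocks = to_blocks(b"lego castle")
print(blocks)
print(from_blocks(blocks))
print(find_by_label(object_store, "season", "winter"))
print(read_path(file_tree, "/wardrobe/top-shelf/hat.txt"))
```

The point of the sketch is only the addressing style: block numbers, keys with metadata, and paths through a hierarchy.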

Storage Service Offerings

AWS, Azure and GCP provide various cloud storage services. Most of them can be mapped to the three storage types, as below.

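Block Storage

- AWS: Elastic Block Store (EBS)

- Azure: Managed Disks

- GCP: Persistent Disk, Local SSD

Object Storage

- AWS: Simple Storage Service (S3)

- Azure: Blob Storage

- GCP: Cloud Storage

File Storage

- AWS: Elastic File System (EFS), FSx

- Azure: Azure Files, Azure NetApp Files

- GCP: Filestore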

There are more data storage related services, as we’ve mapped in our Cloud Product Mapping article, but in the following sections, we’ll just focus on the ones listed above.

Block Storage

Among the three storage types, block storage is the best-performing one. In our analogy earlier, assembling Lego blocks may take a bit of time, but storage systems can do it in an astonishingly short amount of time. If performance is your key requirement, block storage would be the first storage type to consider. Block storage can be further grouped into two sub-types, Hard Disk Drives (HDD) and Solid State Drives (SSD).

HDDs are traditional storage devices that have platters spinning inside them. I had a platter taken out of my old hard disk, as shown in the photo. It’s a bit like a smaller version of a DVD (magnetized instead of burnt by laser), and it runs in a faster and more durable manner. An SSD, on the other hand, has no moving parts. It uses memory chips instead. This mechanical difference makes SSDs faster but more expensive than HDDs. These characteristics also apply to cloud-based block storage.

The block storage volume types of AWS, Azure and GCP can be grouped under HDD and SSD, with some variation, as shown below.

AWS EBS volumes

- General Purpose SSD

- Provisioned IOPS SSD

- Throughput Optimized HDD

- Cold HDD

Azure Managed Disk Types

- Ultra Disk (SSD)

- Premium SSD

- Standard SSD

- Standard HDD

GCP Persistent Disk Types

- Balanced Persistent Disks (SSD)

- SSD Persistent Disks

- Extreme Persistent Disks (SSD)

- Standard Persistent Disks (HDD)

Plenty of options, aren’t there? When we consider the three key factors of block storage, i.e. Volume Size, IOPS and Throughput, the options subdivide further. We won’t compare them one by one here. The details are available at AWS EBS Volume Types, Azure Disk Types and GCP Storage Options. The beauty of cloud-based block storage is that, if we happen to choose the wrong type, we can change it fairly easily and quickly. Having said that, even though all three CSPs support dynamic changes to their disk types, there are some limitations. For example, none of the three CSPs supports downsizing a disk volume dynamically. And some disk types can’t be switched to or from dynamically either. It’s recommended to read through their user guides to understand the limits beforehand, to avoid being trapped by data gravity down the track.
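As a rough illustration of how the three factors relate, the sketch below approximates throughput as IOPS multiplied by the I/O size, and mirrors the "no dynamic downsizing" limitation as a simple check. The `Volume` class, its field names and the sample numbers are hypothetical; real volumes are also subject to per-type caps that this sketch ignores.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Volume:
    """Hypothetical description of a block volume (not a CSP API object)."""
    size_gib: int
    iops: int
    io_size_kib: int  # size of each I/O operation


def throughput_mib_s(volume: Volume) -> float:
    """Approximate throughput as IOPS multiplied by the I/O size."""
    return volume.iops * volume.io_size_kib / 1024


def can_resize(current: Volume, new_size_gib: int) -> bool:
    """Mirror the limitation in the text: growing is allowed, shrinking is not."""
    return new_size_gib >= current.size_gib


vol = Volume(size_gib=100, iops=3000, io_size_kib=16)
print(throughput_mib_s(vol))  # 3000 * 16 / 1024 MiB/s
print(can_resize(vol, 200))   # growing the volume
print(can_resize(vol, 50))    # shrinking the volume
```

The takeaway is that the same IOPS figure yields very different throughput depending on the I/O size, which is why the three factors need to be considered together.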

The other thing you may have noticed in GCP’s service list is that it has an extra block storage type called Local SSD. It refers to SSDs that are physically attached to the GCP server hosting the VM instances. Its purpose is to provide super performance, e.g. very high IOPS. How super are we talking? For example, the maximum IOPS of AWS’s Provisioned IOPS SSD is 256,000 and that of Azure’s Ultra Disk is 160,000. Let’s give some space here for the big entry from GCP. OK, GCP’s Local SSD (via the NVMe interface) can reach up to 2,400,000 read IOPS and 1,200,000 write IOPS. It definitely blows the others out of the water. However, this performance gain has its trade-offs in availability, durability and flexibility, which is why GCP didn’t put it in the persistent disk bucket. If you need temporary storage with super high performance and low latency, this storage type is worth exploring.
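To put those figures side by side, here is a small calculation using only the peak IOPS numbers quoted above. They reflect the time of writing and may have changed since; the dictionary keys and the `ratio` helper are simply our own labels.

```python
# Peak IOPS figures as quoted in this article (they may have changed since).
MAX_IOPS = {
    "AWS Provisioned IOPS SSD": 256_000,
    "Azure Ultra Disk": 160_000,
    "GCP Local SSD (read)": 2_400_000,
    "GCP Local SSD (write)": 1_200_000,
}


def ratio(faster: str, slower: str) -> float:
    """How many times higher the first figure is than the second."""
    return MAX_IOPS[faster] / MAX_IOPS[slower]


for local in ("GCP Local SSD (read)", "GCP Local SSD (write)"):
    for other in ("AWS Provisioned IOPS SSD", "Azure Ultra Disk"):
        print(f"{local} vs {other}: {ratio(local, other):.2f}x")
```

Keep in mind that this compares a local, non-persistent disk with persistent ones, so the ratios say nothing about the durability trade-offs mentioned above.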

Object Storage

Having looked at block storage from the performance aspect, let’s take a look at object storage. One key difference between the two is that we can’t change parts of an object in object storage. We have to write the object completely at once. Because of this, object storage is more suitable for requirements like storing massive amounts of unstructured data for data analytics, distributed access, static web content, backup/restore, etc. AWS’s S3, Azure’s Blob Storage and GCP’s Cloud Storage are counterparts in this area.
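The difference in update behaviour can be sketched with two toy classes. `BlockVolume` and `ObjectBucket` are hypothetical, in-memory stand-ins written for this article, not any CSP’s SDK.

```python
class BlockVolume:
    """Toy block device: any block can be overwritten in place."""

    def __init__(self, num_blocks: int, block_size: int = 4) -> None:
        self.block_size = block_size
        self.blocks = [bytes(block_size) for _ in range(num_blocks)]

    def write_block(self, index: int, data: bytes) -> None:
        if len(data) != self.block_size:
            raise ValueError("data must be exactly one block long")
        self.blocks[index] = data

    def read_all(self) -> bytes:
        return b"".join(self.blocks)


class ObjectBucket:
    """Toy object store: objects are written and replaced as a whole."""

    def __init__(self) -> None:
        self._objects = {}

    def put(self, key: str, data: bytes) -> None:
        self._objects[key] = bytes(data)  # the full object is stored each time

    def get(self, key: str) -> bytes:
        return self._objects[key]


volume = BlockVolume(num_blocks=3)
for i, chunk in enumerate([b"AAAA", b"BBBB", b"CCCC"]):
    volume.write_block(i, chunk)
volume.write_block(1, b"XXXX")  # change only the middle block
print(volume.read_all())

bucket = ObjectBucket()
bucket.put("report", b"AAAABBBBCCCC")
# To change the middle part: read, modify locally, then write the whole object again.
original = bucket.get("report")
bucket.put("report", original[:4] + b"XXXX" + original[8:])
print(bucket.get("report"))
```

Both end up with the same bytes, but the object store had to rewrite all twelve bytes to change four of them, which is why it suits write-once, read-many data.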

AWS S3

- S3 Standard

- S3 Intelligent-Tiering

- S3 Standard-IA

- S3 One Zone-IA

- S3 Glacier Instant Retrieval

- S3 Glacier Flexible Retrieval

- S3 Glacier Deep Archive

- S3 Outposts

Azure Blob Storage

- General-purpose v2

- Block Blob

- Page Blob

GCP Cloud Storage

- Standard Storage

- Nearline Storage

- Coldline Storage

- Archive Storage

Judging by the lists alone, AWS has gone the farthest. Maybe that’s related to the history of its object storage service, given it was one of AWS’s earliest cloud services, launched back in 2006. However, having too many options can sometimes be confusing too. Which one is the most cost-effective tier for our data?

AWS may have thought about this concern and offered the S3 Intelligent-Tiering option. It can automatically move data to the most cost-effective tier based on access frequency. It reminds me of the AUTO option on cameras. It’s the option I’ve used most on my camera. But the auto option doesn’t cover all the features provided by the manual options. Similarly, Intelligent-Tiering only moves data between a limited set of tiers, e.g. it can’t (yet) move data to Glacier tiers.

To move data from S3 Intelligent-Tiering to other storage classes, we’ll have to use Lifecycle Management. Object lifecycle management is not unique to AWS. All three CSPs provide lifecycle management functions that allow users to either transition or expire/delete objects. The details can be found at AWS-Managing your storage lifecycle, Azure-Optimize costs by automatically managing the data lifecycle, and GCP-Object Lifecycle Management.
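As an illustration, a lifecycle rule can be thought of as a list of age-based transitions plus an optional expiration. The dictionary below follows the general shape of an S3 lifecycle configuration, but the rule ID, prefix and day counts are hypothetical, and `storage_class_at` is our own helper for reasoning about the rule, not part of any SDK.

```python
from typing import Optional

# A lifecycle rule in the general shape S3 uses for its lifecycle configuration.
# The rule ID, prefix and day counts are hypothetical values for illustration.
lifecycle_configuration = {
    "Rules": [
        {
            "ID": "archive-then-expire-logs",
            "Filter": {"Prefix": "logs/"},
            "Status": "Enabled",
            "Transitions": [
                {"Days": 90, "StorageClass": "GLACIER"},
                {"Days": 180, "StorageClass": "DEEP_ARCHIVE"},
            ],
            "Expiration": {"Days": 365},
        }
    ]
}


def storage_class_at(
    rule: dict, age_days: int, initial: str = "INTELLIGENT_TIERING"
) -> Optional[str]:
    """Return the class an object would be in at a given age, or None once expired."""
    expiration = rule.get("Expiration", {}).get("Days")
    if expiration is not None and age_days >= expiration:
        return None
    current = initial
    # Apply transitions in order of age; the last one reached wins.
    for transition in sorted(rule.get("Transitions", []), key=lambda t: t["Days"]):
        if age_days >= transition["Days"]:
            current = transition["StorageClass"]
    return current


rule = lifecycle_configuration["Rules"][0]
for age in (10, 90, 200, 365):
    print(age, storage_class_at(rule, age))
```

With boto3, a configuration of this shape would typically be applied through the S3 client’s `put_bucket_lifecycle_configuration` call. Azure and GCP express the same idea with their own rule formats.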

There are many things to consider when we use object storage services. Intelligent tiering and lifecycle management are associated with one of them, i.e. cost. In addition to cost, we also need to consider scalability, security, compliance, performance and connectivity. It’s recommended to think through these factors before choosing a CSP and its object storage tiers and classes.

File Storage

The key word in file storage is hierarchy. We are familiar with this file hierarchy in our daily life, e.g. directories and folders. We had been using file storage for quite a while before cloud services became available.

When we manage our files, we often need to share them among users, especially business users. So we had the Network Attached Storage (NAS) solution, which solved the file sharing requirements. But then the requirements kept growing, e.g. high availability, high scalability, low maintenance and pay-as-you-go (PAYG) pricing. CSPs then put their hands up by providing NAS as a service in the cloud. That’s the cloud-based file storage service we are talking about here.

Similar to the other storage offerings, AWS, Azure and GCP also provide multiple tiers and classes for their file storage services, as mapped below.

AWS EFS

- EFS Standard

- EFS Standard-IA

- EFS One Zone

- EFS One Zone-IA

Azure Files

- Premium

- Transaction Optimized

- Hot

- Cool

GCP Filestore

- Basic

- Enterprise

- High Scale

AWS groups the EFS classes by availability and access frequency, e.g. Multi-AZ vs Single AZ and Frequent Access vs Infrequent Access. Combining them gives us four classes. Pretty straightforward. However, another key aspect that needs to be considered for file storage is performance. AWS addresses it with two additional modes: Performance Mode (General Purpose or Max I/O) and Throughput Mode (Bursting or Provisioned). By combining the classes and modes, AWS provides a decent range of file storage offerings to meet different types of requirements. More details of these classes and modes are available at AWS EFS Performance Summary.
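The way the classes and modes multiply out can be shown in a few lines. This is only an enumeration of the names mentioned above. Whether AWS supports every class and mode combination is not something this sketch checks, so treat the final count as an upper bound.

```python
from itertools import product

# Two availability options and two access-frequency options, as described above.
AVAILABILITY = {"Standard": "Multi-AZ", "One Zone": "Single AZ"}
ACCESS = {"": "Frequent Access", "-IA": "Infrequent Access"}

efs_classes = [
    f"EFS {availability}{suffix}"
    for availability, suffix in product(AVAILABILITY, ACCESS)
]
print(efs_classes)

PERFORMANCE_MODES = ["General Purpose", "Max I/O"]
THROUGHPUT_MODES = ["Bursting", "Provisioned"]

# Upper bound on class/mode combinations; not all are necessarily supported.
combinations = list(product(efs_classes, PERFORMANCE_MODES, THROUGHPUT_MODES))
print(len(combinations))
```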

AWS FSx is another type of file storage service. We can consider it as fully managed Windows-based file servers, whereas EFS can be considered an OS-agnostic network file system. These two file storage services overlap to some extent, which may cause confusion about which one to use. Sadly, AWS doesn’t provide much official guidance on when to use which. We found that TechTarget’s article, Amazon FSx vs EFS: Compare the AWS file services, sheds some good light on the differences.

Azure groups its file storage tiers directly by performance, i.e. latency, throughput and IOPS. It boils down to what type of storage media is used behind the scenes. For example, the Premium tier is backed by SSD, which gives us high performance, high IOPS and low latency. The other three tiers are backed by HDD. The details are available at Azure Files Storage Tiers.

Wait, what about availability? Well, that brings up the Azure Storage Account concept. Azure groups both Blob Storage and File Storage (along with two other storage services) under the Storage Account, and the redundancy setting sits at this level. Azure’s redundancy options include Locally Redundant Storage (LRS) and Zone-Redundant Storage (ZRS). Once we select a redundancy option at the storage account level, it’s shared by all storage services under it. More information is available at Azure Storage Redundancy.

In addition, Azure provides an enterprise-grade file storage service called Azure NetApp Files. It addresses the next level of demand for high performance and low latency. Azure has provided a detailed comparison article at Azure Files and Azure NetApp Files comparison.

On the GCP side, there used to be two tiers: Basic and Enterprise. A third tier called High Scale is being added, but it’s still in preview (at the time of writing). GCP groups these tiers by performance as well as availability. We can choose HDD or SSD under the Basic tier to get different throughput and IOPS. If we require regional availability, we can go with the Enterprise tier. And if we want super performance, we can choose the High Scale tier, which provides even higher IOPS and throughput than Azure NetApp Files. Sounds familiar, doesn’t it? A bit like the Local SSD we covered in the Block Storage section earlier. We can almost hear GCP saying “If we can’t beat them on the breadth (yet), we’ll make sure we are ahead on the depth”.

Pricing

We always include a pricing section in our cloud service comparison articles. Given there are 12 different storage services, 38 tiers/classes and many sub-groupings underneath them, let’s keep this pricing section lightweight. We’ll put the reference links to the CSPs’ pricing pages below.

AWS

- Elastic Block Store (EBS) Pricing

- Simple Storage Service (S3) Pricing

- Elastic File System (EFS) Pricing

- FSx Pricing

Azure

GCP
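The counts quoted at the start of this section can be cross-checked against the tier lists earlier in the article with a quick tally. The tier names are copied from those lists. Treating FSx, Azure NetApp Files and Local SSD as the three remaining services is our reading of the article, so take that grouping as an assumption.

```python
# Tier/class lists as they appear earlier in this article.
STORAGE_TIERS = {
    "AWS EBS": ["General Purpose SSD", "Provisioned IOPS SSD",
                "Throughput Optimized HDD", "Cold HDD"],
    "Azure Managed Disks": ["Ultra Disk", "Premium SSD", "Standard SSD", "Standard HDD"],
    "GCP Persistent Disk": ["Balanced", "SSD", "Extreme", "Standard"],
    "AWS S3": ["Standard", "Intelligent-Tiering", "Standard-IA", "One Zone-IA",
               "Glacier Instant Retrieval", "Glacier Flexible Retrieval",
               "Glacier Deep Archive", "Outposts"],
    "Azure Blob Storage": ["General-purpose v2", "Block Blob", "Page Blob"],
    "GCP Cloud Storage": ["Standard", "Nearline", "Coldline", "Archive"],
    "AWS EFS": ["Standard", "Standard-IA", "One Zone", "One Zone-IA"],
    "Azure Files": ["Premium", "Transaction Optimized", "Hot", "Cool"],
    "GCP Filestore": ["Basic", "Enterprise", "High Scale"],
}

# Services discussed in the article without a tier list of their own (assumption).
OTHER_SERVICES = ["AWS FSx", "Azure NetApp Files", "GCP Local SSD"]

total_services = len(STORAGE_TIERS) + len(OTHER_SERVICES)
total_tiers = sum(len(tiers) for tiers in STORAGE_TIERS.values())
print(total_services, total_tiers)
```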

Summary

Storing data in cloud storage without planning is like rolling a snowball. At the beginning, it might seem manageable. But as it gradually grows bigger, it often becomes very difficult to control or reshape. So it’s critical to know the storage service well before throwing data into it. We hope this article helps in some way.

The analogies we’ve used here may not precisely define the cloud storage services, but hopefully they help with remembering and better understanding these services and options. We’ll continue to write more cloud service comparison articles for other domains. The links to all these comparison articles are available in our Cloud Product Mapping.